Mirror of https://github.com/simonw/datasette.git, synced 2025-12-10 16:51:24 +01:00

Compare commits: main...prepare_as (1 commit)

| Author | SHA1 | Date | |
|---|---|---|---|
| | 93bfa26bfd | | |

278 changed files with 19857 additions and 56441 deletions

@@ -1,2 +0,0 @@
[run]
omit = datasette/_version.py, datasette/utils/shutil_backport.py

@@ -3,11 +3,10 @@
.eggs
.gitignore
.ipynb_checkpoints
.travis.yml
build
*.spec
*.egg-info
dist
scratchpad
venv
*.db
*.sqlite

@@ -1,4 +0,0 @@
# Applying Black
35d6ee2790e41e96f243c1ff58be0c9c0519a8ce
368638555160fb9ac78f462d0f79b1394163fa30
2b344f6a34d2adaa305996a1a580ece06397f6e4

1

.gitattributes

vendored

@@ -1 +1,2 @@
datasette/_version.py export-subst
datasette/static/codemirror-* linguist-vendored

1

.github/FUNDING.yml

vendored

@@ -1 +0,0 @@
github: [simonw]

11

.github/dependabot.yml

vendored

@@ -1,11 +0,0 @@
version: 2
updates:
- package-ecosystem: pip
  directory: "/"
  schedule:
    interval: daily
    time: "13:00"
  groups:
    python-packages:
      patterns:
        - "*"

35

.github/workflows/deploy-branch-preview.yml

vendored

@@ -1,35 +0,0 @@
name: Deploy a Datasette branch preview to Vercel

on:
  workflow_dispatch:
    inputs:
      branch:
        description: "Branch to deploy"
        required: true
        type: string

jobs:
  deploy-branch-preview:
    runs-on: ubuntu-latest
    steps:
    - uses: actions/checkout@v3
    - name: Set up Python 3.11
      uses: actions/setup-python@v6
      with:
        python-version: "3.11"
    - name: Install dependencies
      run: |
        pip install datasette-publish-vercel
    - name: Deploy the preview
      env:
        VERCEL_TOKEN: ${{ secrets.BRANCH_PREVIEW_VERCEL_TOKEN }}
      run: |
        export BRANCH="${{ github.event.inputs.branch }}"
        wget https://latest.datasette.io/fixtures.db
        datasette publish vercel fixtures.db \
          --branch $BRANCH \
          --project "datasette-preview-$BRANCH" \
          --token $VERCEL_TOKEN \
          --scope datasette \
          --about "Preview of $BRANCH" \
          --about_url "https://github.com/simonw/datasette/tree/$BRANCH"

132

.github/workflows/deploy-latest.yml

vendored

@@ -1,132 +0,0 @@
name: Deploy latest.datasette.io

on:
  workflow_dispatch:
  push:
    branches:
      - main
      # - 1.0-dev

permissions:
  contents: read

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
    - name: Check out datasette
      uses: actions/checkout@v5
    - name: Set up Python
      uses: actions/setup-python@v6
      with:
        python-version: "3.13"
        cache: pip
    - name: Install Python dependencies
      run: |
        python -m pip install --upgrade pip
        python -m pip install -e .[test]
        python -m pip install -e .[docs]
        python -m pip install sphinx-to-sqlite==0.1a1
    - name: Run tests
      if: ${{ github.ref == 'refs/heads/main' }}
      run: |
        pytest -n auto -m "not serial"
        pytest -m "serial"
    - name: Build fixtures.db and other files needed to deploy the demo
      run: |-
        python tests/fixtures.py \
          fixtures.db \
          fixtures-config.json \
          fixtures-metadata.json \
          plugins \
          --extra-db-filename extra_database.db
    - name: Build docs.db
      if: ${{ github.ref == 'refs/heads/main' }}
      run: |-
        cd docs
        DISABLE_SPHINX_INLINE_TABS=1 sphinx-build -b xml . _build
        sphinx-to-sqlite ../docs.db _build
        cd ..
    - name: Set up the alternate-route demo
      run: |
        echo '
        from datasette import hookimpl

        @hookimpl
        def startup(datasette):
            db = datasette.get_database("fixtures2")
            db.route = "alternative-route"
        ' > plugins/alternative_route.py
        cp fixtures.db fixtures2.db
    - name: And the counters writable canned query demo
      run: |
        cat > plugins/counters.py <<EOF
        from datasette import hookimpl
        @hookimpl
        def startup(datasette):
            db = datasette.add_memory_database("counters")
            async def inner():
                await db.execute_write("create table if not exists counters (name text primary key, value integer)")
                await db.execute_write("insert or ignore into counters (name, value) values ('counter_a', 0)")
                await db.execute_write("insert or ignore into counters (name, value) values ('counter_b', 0)")
                await db.execute_write("insert or ignore into counters (name, value) values ('counter_c', 0)")
            return inner
        @hookimpl
        def canned_queries(database):
            if database == "counters":
                queries = {}
                for name in ("counter_a", "counter_b", "counter_c"):
                    queries["increment_{}".format(name)] = {
                        "sql": "update counters set value = value + 1 where name = '{}'".format(name),
                        "on_success_message_sql": "select 'Counter {name} incremented to ' || value from counters where name = '{name}'".format(name=name),
                        "write": True,
                    }
                    queries["decrement_{}".format(name)] = {
                        "sql": "update counters set value = value - 1 where name = '{}'".format(name),
                        "on_success_message_sql": "select 'Counter {name} decremented to ' || value from counters where name = '{name}'".format(name=name),
                        "write": True,
                    }
                return queries
        EOF
    # - name: Make some modifications to metadata.json
    #   run: |
    #     cat fixtures.json | \
    #       jq '.databases |= . + {"ephemeral": {"allow": {"id": "*"}}}' | \
    #       jq '.plugins |= . + {"datasette-ephemeral-tables": {"table_ttl": 900}}' \
    #       > metadata.json
    #     cat metadata.json
    - id: auth
      name: Authenticate to Google Cloud
      uses: google-github-actions/auth@v3
      with:
        credentials_json: ${{ secrets.GCP_SA_KEY }}
    - name: Set up Cloud SDK
      uses: google-github-actions/setup-gcloud@v3
    - name: Deploy to Cloud Run
      env:
        LATEST_DATASETTE_SECRET: ${{ secrets.LATEST_DATASETTE_SECRET }}
      run: |-
        gcloud config set run/region us-central1
        gcloud config set project datasette-222320
        export SUFFIX="-${GITHUB_REF#refs/heads/}"
        export SUFFIX=${SUFFIX#-main}
        # Replace 1.0 with one-dot-zero in SUFFIX
        export SUFFIX=${SUFFIX//1.0/one-dot-zero}
        datasette publish cloudrun fixtures.db fixtures2.db extra_database.db \
          -m fixtures-metadata.json \
          --plugins-dir=plugins \
          --branch=$GITHUB_SHA \
          --version-note=$GITHUB_SHA \
          --extra-options="--setting template_debug 1 --setting trace_debug 1 --crossdb" \
          --install 'datasette-ephemeral-tables>=0.2.2' \
          --service "datasette-latest$SUFFIX" \
          --secret $LATEST_DATASETTE_SECRET
    - name: Deploy to docs as well (only for main)
      if: ${{ github.ref == 'refs/heads/main' }}
      run: |-
        # Deploy docs.db to a different service
        datasette publish cloudrun docs.db \
          --branch=$GITHUB_SHA \
          --version-note=$GITHUB_SHA \
          --extra-options="--setting template_debug 1" \
          --service=datasette-docs-latest
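Note on the removed deploy-latest.yml: the `SUFFIX` handling in its "Deploy to Cloud Run" step relies on Bash parameter expansion, which is easy to misread. The following is a minimal Python sketch of the same string logic, not code from the repository; the function name `service_name` is hypothetical and exists only for illustration.

```python
def service_name(github_ref: str) -> str:
    """Mirror the Bash expansions that build the Cloud Run service name."""
    # "-${GITHUB_REF#refs/heads/}": drop a leading "refs/heads/", then prefix "-"
    suffix = "-" + github_ref.removeprefix("refs/heads/")
    # "${SUFFIX#-main}": drop a leading "-main", so the main branch gets no suffix
    suffix = suffix.removeprefix("-main")
    # "${SUFFIX//1.0/one-dot-zero}": replace every literal "1.0"
    suffix = suffix.replace("1.0", "one-dot-zero")
    return "datasette-latest" + suffix


print(service_name("refs/heads/main"))     # datasette-latest
print(service_name("refs/heads/1.0-dev"))  # datasette-latest-one-dot-zero-dev
```

The sketch assumes Python 3.9 or later for `str.removeprefix`. The dot replacement is presumably there because the branch name ends up in a service name, where a literal `1.0` would be awkward.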

16

.github/workflows/documentation-links.yml

vendored

@@ -1,16 +0,0 @@
name: Read the Docs Pull Request Preview
on:
  pull_request_target:
    types:
      - opened

permissions:
  pull-requests: write

jobs:
  documentation-links:
    runs-on: ubuntu-latest
    steps:
      - uses: readthedocs/actions/preview@v1
        with:
          project-slug: "datasette"

25

.github/workflows/prettier.yml

vendored

@@ -1,25 +0,0 @@
name: Check JavaScript for conformance with Prettier

on: [push]

permissions:
  contents: read

jobs:
  prettier:
    runs-on: ubuntu-latest
    steps:
    - name: Check out repo
      uses: actions/checkout@v4
    - uses: actions/cache@v4
      name: Configure npm caching
      with:
        path: ~/.npm
        key: ${{ runner.OS }}-npm-${{ hashFiles('**/package-lock.json') }}
        restore-keys: |
          ${{ runner.OS }}-npm-
    - name: Install dependencies
      run: npm ci
    - name: Run prettier
      run: |-
        npm run prettier -- --check

109

.github/workflows/publish.yml

vendored

@@ -1,109 +0,0 @@
name: Publish Python Package

on:
  release:
    types: [created]

permissions:
  contents: read

jobs:
  test:
    runs-on: ubuntu-latest
    strategy:
      matrix:
        python-version: ["3.10", "3.11", "3.12", "3.13", "3.14"]
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python ${{ matrix.python-version }}
      uses: actions/setup-python@v6
      with:
        python-version: ${{ matrix.python-version }}
        cache: pip
        cache-dependency-path: pyproject.toml
    - name: Install dependencies
      run: |
        pip install -e '.[test]'
    - name: Run tests
      run: |
        pytest

  deploy:
    runs-on: ubuntu-latest
    needs: [test]
    environment: release
    permissions:
      id-token: write
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python
      uses: actions/setup-python@v6
      with:
        python-version: '3.13'
        cache: pip
        cache-dependency-path: pyproject.toml
    - name: Install dependencies
      run: |
        pip install setuptools wheel build
    - name: Build
      run: |
        python -m build
    - name: Publish
      uses: pypa/gh-action-pypi-publish@release/v1

  deploy_static_docs:
    runs-on: ubuntu-latest
    needs: [deploy]
    if: "!github.event.release.prerelease"
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python
      uses: actions/setup-python@v6
      with:
        python-version: '3.10'
        cache: pip
        cache-dependency-path: pyproject.toml
    - name: Install dependencies
      run: |
        python -m pip install -e .[docs]
        python -m pip install sphinx-to-sqlite==0.1a1
    - name: Build docs.db
      run: |-
        cd docs
        DISABLE_SPHINX_INLINE_TABS=1 sphinx-build -b xml . _build
        sphinx-to-sqlite ../docs.db _build
        cd ..
    - id: auth
      name: Authenticate to Google Cloud
      uses: google-github-actions/auth@v2
      with:
        credentials_json: ${{ secrets.GCP_SA_KEY }}
    - name: Set up Cloud SDK
      uses: google-github-actions/setup-gcloud@v3
    - name: Deploy stable-docs.datasette.io to Cloud Run
      run: |-
        gcloud config set run/region us-central1
        gcloud config set project datasette-222320
        datasette publish cloudrun docs.db \
          --service=datasette-docs-stable

  deploy_docker:
    runs-on: ubuntu-latest
    needs: [deploy]
    if: "!github.event.release.prerelease"
    steps:
    - uses: actions/checkout@v4
    - name: Build and push to Docker Hub
      env:
        DOCKER_USER: ${{ secrets.DOCKER_USER }}
        DOCKER_PASS: ${{ secrets.DOCKER_PASS }}
      run: |-
        sleep 60 # Give PyPI time to make the new release available
        docker login -u $DOCKER_USER -p $DOCKER_PASS
        export REPO=datasetteproject/datasette
        docker build -f Dockerfile \
          -t $REPO:${GITHUB_REF#refs/tags/} \
          --build-arg VERSION=${GITHUB_REF#refs/tags/} .
        docker tag $REPO:${GITHUB_REF#refs/tags/} $REPO:latest
        docker push $REPO:${GITHUB_REF#refs/tags/}
        docker push $REPO:latest
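Note on the removed publish.yml: its four jobs are chained through `needs:`, so the order in which they can run is implied rather than stated. As a rough illustration only, the dependency graph can be written down and sorted with Python's standard `graphlib`; this sketch ignores the `if:` conditions on the last two jobs and says nothing about how GitHub Actions schedules work internally.

```python
from graphlib import TopologicalSorter

# Each job maps to the jobs listed under its `needs:` key in publish.yml.
needs = {
    "test": [],
    "deploy": ["test"],
    "deploy_static_docs": ["deploy"],
    "deploy_docker": ["deploy"],
}

# static_order() yields every job after all of the jobs it depends on.
order = list(TopologicalSorter(needs).static_order())
print(order)
```

`test` always comes first and `deploy` second; the two remaining jobs depend only on `deploy`, so their relative order is not fixed and they could run in parallel.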

28

.github/workflows/push_docker_tag.yml

vendored

@@ -1,28 +0,0 @@
name: Push specific Docker tag

on:
  workflow_dispatch:
    inputs:
      version_tag:
        description: Tag to build and push

permissions:
  contents: read

jobs:
  deploy_docker:
    runs-on: ubuntu-latest
    steps:
    - uses: actions/checkout@v2
    - name: Build and push to Docker Hub
      env:
        DOCKER_USER: ${{ secrets.DOCKER_USER }}
        DOCKER_PASS: ${{ secrets.DOCKER_PASS }}
        VERSION_TAG: ${{ github.event.inputs.version_tag }}
      run: |-
        docker login -u $DOCKER_USER -p $DOCKER_PASS
        export REPO=datasetteproject/datasette
        docker build -f Dockerfile \
          -t $REPO:${VERSION_TAG} \
          --build-arg VERSION=${VERSION_TAG} .
        docker push $REPO:${VERSION_TAG}

27

.github/workflows/spellcheck.yml

vendored

@@ -1,27 +0,0 @@
name: Check spelling in documentation

on: [push, pull_request]

permissions:
  contents: read

jobs:
  spellcheck:
    runs-on: ubuntu-latest
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python
      uses: actions/setup-python@v6
      with:
        python-version: '3.11'
        cache: 'pip'
        cache-dependency-path: '**/pyproject.toml'
    - name: Install dependencies
      run: |
        pip install -e '.[docs]'
    - name: Check spelling
      run: |
        codespell README.md --ignore-words docs/codespell-ignore-words.txt
        codespell docs/*.rst --ignore-words docs/codespell-ignore-words.txt
        codespell datasette -S datasette/static --ignore-words docs/codespell-ignore-words.txt
        codespell tests --ignore-words docs/codespell-ignore-words.txt

76

.github/workflows/stable-docs.yml

vendored

@@ -1,76 +0,0 @@
name: Update Stable Docs

on:
  release:
    types: [published]
  push:
    branches:
      - main

permissions:
  contents: write

jobs:
  update_stable_docs:
    runs-on: ubuntu-latest
    steps:
    - name: Checkout repository
      uses: actions/checkout@v5
      with:
        fetch-depth: 0 # We need all commits to find docs/ changes
    - name: Set up Git user
      run: |
        git config user.name "Automated"
        git config user.email "actions@users.noreply.github.com"
    - name: Create stable branch if it does not yet exist
      run: |
        if ! git ls-remote --heads origin stable | grep -qE '\bstable\b'; then
          # Make sure we have all tags locally
          git fetch --tags --quiet

          # Latest tag that is just numbers and dots (optionally prefixed with 'v')
          # e.g., 0.65.2 or v0.65.2 — excludes 1.0a20, 1.0-rc1, etc.
          LATEST_RELEASE=$(
            git tag -l --sort=-v:refname \
              | grep -E '^v?[0-9]+(\.[0-9]+){1,3}$' \
              | head -n1
          )

          git checkout -b stable

          # If there are any stable releases, copy docs/ from the most recent
          if [ -n "$LATEST_RELEASE" ]; then
            rm -rf docs/
            git checkout "$LATEST_RELEASE" -- docs/ || true
          fi

          git commit -m "Populate docs/ from $LATEST_RELEASE" || echo "No changes"
          git push -u origin stable
        fi
    - name: Handle Release
      if: github.event_name == 'release' && !github.event.release.prerelease
      run: |
        git fetch --all
        git checkout stable
        git reset --hard ${GITHUB_REF#refs/tags/}
        git push origin stable --force
    - name: Handle Commit to Main
      if: contains(github.event.head_commit.message, '!stable-docs')
      run: |
        git fetch origin
        git checkout -b stable origin/stable
        # Get the list of modified files in docs/ from the current commit
        FILES=$(git diff-tree --no-commit-id --name-only -r ${{ github.sha }} -- docs/)
        # Check if the list of files is non-empty
        if [[ -n "$FILES" ]]; then
          # Checkout those files to the stable branch to over-write with their contents
          for FILE in $FILES; do
            git checkout ${{ github.sha }} -- $FILE
          done
          git add docs/
          git commit -m "Doc changes from ${{ github.sha }}"
          git push origin stable
        else
          echo "No changes to docs/ in this commit."
          exit 0
        fi
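Note on the removed stable-docs.yml: the `LATEST_RELEASE` pipeline picks the newest tag that consists only of digits and dots. The sketch below reproduces that filter in Python with the same regular expression; the helper name `latest_stable` and the sample tag list are made up for illustration, and the numeric comparison only approximates what `git tag --sort=-v:refname` does.

```python
import re

# Same pattern as the `grep -E` expression in the workflow.
STABLE_TAG = re.compile(r"^v?[0-9]+(\.[0-9]+){1,3}$")


def latest_stable(tags):
    """Return the highest tag made only of digits and dots, or None."""
    stable = [t for t in tags if STABLE_TAG.match(t)]
    if not stable:
        return None

    def version_key(tag):
        # Compare component by component as integers, ignoring a leading "v"
        return tuple(int(part) for part in tag.lstrip("v").split("."))

    return max(stable, key=version_key)


# Hypothetical tag list: alphas and release candidates are filtered out.
print(latest_stable(["0.65.2", "0.9", "1.0a20", "1.0-rc1", "v0.64"]))  # 0.65.2
```

When no tag matches, the function returns `None`, which corresponds to the empty `$LATEST_RELEASE` case that the workflow guards with `[ -n "$LATEST_RELEASE" ]`.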

40

.github/workflows/test-coverage.yml

vendored

@@ -1,40 +0,0 @@
name: Calculate test coverage

on:
  push:
    branches:
      - main
  pull_request:
    branches:
      - main
permissions:
  contents: read

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
    - name: Check out datasette
      uses: actions/checkout@v4
    - name: Set up Python
      uses: actions/setup-python@v6
      with:
        python-version: '3.12'
        cache: 'pip'
        cache-dependency-path: '**/pyproject.toml'
    - name: Install Python dependencies
      run: |
        python -m pip install --upgrade pip
        python -m pip install -e .[test]
        python -m pip install pytest-cov
    - name: Run tests
      run: |-
        ls -lah
        cat .coveragerc
        pytest -m "not serial" --cov=datasette --cov-config=.coveragerc --cov-report xml:coverage.xml --cov-report term -x
        ls -lah
    - name: Upload coverage report
      uses: codecov/codecov-action@v1
      with:
        token: ${{ secrets.CODECOV_TOKEN }}
        file: coverage.xml

33

.github/workflows/test-pyodide.yml

vendored

@@ -1,33 +0,0 @@
name: Test in Pyodide with shot-scraper

on:
  push:
  pull_request:
  workflow_dispatch:

permissions:
  contents: read

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python 3.10
      uses: actions/setup-python@v6
      with:
        python-version: "3.10"
        cache: 'pip'
        cache-dependency-path: '**/pyproject.toml'
    - name: Cache Playwright browsers
      uses: actions/cache@v4
      with:
        path: ~/.cache/ms-playwright/
        key: ${{ runner.os }}-browsers
    - name: Install Playwright dependencies
      run: |
        pip install shot-scraper build
        shot-scraper install
    - name: Run test
      run: |
        ./test-in-pyodide-with-shot-scraper.sh

53

.github/workflows/test-sqlite-support.yml

vendored

@@ -1,53 +0,0 @@
name: Test SQLite versions

on: [push, pull_request]

permissions:
  contents: read

jobs:
  test:
    runs-on: ${{ matrix.platform }}
    continue-on-error: true
    strategy:
      matrix:
        platform: [ubuntu-latest]
        python-version: ["3.10", "3.11", "3.12", "3.13", "3.14"]
        sqlite-version: [
          #"3", # latest version
          "3.46",
          #"3.45",
          #"3.27",
          #"3.26",
          "3.25",
          #"3.25.3", # 2018-09-25, window functions breaks test_upsert for some reason on 3.10, skip for now
          #"3.24", # 2018-06-04, added UPSERT support
          #"3.23.1" # 2018-04-10, before UPSERT
        ]
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python ${{ matrix.python-version }}
      uses: actions/setup-python@v6
      with:
        python-version: ${{ matrix.python-version }}
        allow-prereleases: true
        cache: pip
        cache-dependency-path: pyproject.toml
    - name: Set up SQLite ${{ matrix.sqlite-version }}
      uses: asg017/sqlite-versions@71ea0de37ae739c33e447af91ba71dda8fcf22e6
      with:
        version: ${{ matrix.sqlite-version }}
        cflags: "-DSQLITE_ENABLE_DESERIALIZE -DSQLITE_ENABLE_FTS5 -DSQLITE_ENABLE_FTS4 -DSQLITE_ENABLE_FTS3_PARENTHESIS -DSQLITE_ENABLE_RTREE -DSQLITE_ENABLE_JSON1"
    - run: python3 -c "import sqlite3; print(sqlite3.sqlite_version)"
    - run: echo $LD_LIBRARY_PATH
    - name: Build extension for --load-extension test
      run: |-
        (cd tests && gcc ext.c -fPIC -shared -o ext.so)
    - name: Install dependencies
      run: |
        pip install -e '.[test]'
        pip freeze
    - name: Run tests
      run: |
        pytest -n auto -m "not serial"
        pytest -m "serial"
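Note on the removed test-sqlite-support.yml: a `strategy.matrix` block expands to one job per combination of its values. The sketch below works out that cross product for the uncommented entries of this workflow; it only illustrates the arithmetic and is not how GitHub Actions itself is implemented.

```python
from itertools import product

# Uncommented values from the workflow's strategy.matrix block.
matrix = {
    "platform": ["ubuntu-latest"],
    "python-version": ["3.10", "3.11", "3.12", "3.13", "3.14"],
    "sqlite-version": ["3.46", "3.25"],
}

# One job per combination: 1 platform x 5 Python versions x 2 SQLite versions.
jobs = [dict(zip(matrix, combo)) for combo in product(*matrix.values())]
print(len(jobs))  # 10
```

Because the workflow sets `continue-on-error: true` on the job, a failure in one of these ten combinations does not fail the workflow run as a whole.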

51

.github/workflows/test.yml

vendored

@@ -1,51 +0,0 @@
name: Test

on: [push, pull_request]

permissions:
  contents: read

jobs:
  test:
    runs-on: ubuntu-latest
    strategy:
      matrix:
        python-version: ["3.10", "3.11", "3.12", "3.13", "3.14"]
    steps:
    - uses: actions/checkout@v4
    - name: Set up Python ${{ matrix.python-version }}
      uses: actions/setup-python@v6
      with:
        python-version: ${{ matrix.python-version }}
        allow-prereleases: true
        cache: pip
        cache-dependency-path: pyproject.toml
    - name: Build extension for --load-extension test
      run: |-
        (cd tests && gcc ext.c -fPIC -shared -o ext.so)
    - name: Install dependencies
      run: |
        pip install -e '.[test]'
        pip freeze
    - name: Run tests
      run: |
        pytest -n auto -m "not serial"
        pytest -m "serial"
        # And the test that exceeds a localhost HTTPS server
        tests/test_datasette_https_server.sh
    - name: Install docs dependencies
      run: |
        pip install -e '.[docs]'
    - name: Black
      run: black --check .
    - name: Check if cog needs to be run
      run: |
        cog --check docs/*.rst
    - name: Check if blacken-docs needs to be run
      run: |
        # This fails on syntax errors, or a diff was applied
        blacken-docs -l 60 docs/*.rst
    - name: Test DATASETTE_LOAD_PLUGINS
      run: |
        pip install datasette-init datasette-json-html
        tests/test-datasette-load-plugins.sh

15

.github/workflows/tmate-mac.yml

vendored

@@ -1,15 +0,0 @@
name: tmate session mac

on:
  workflow_dispatch:

permissions:
  contents: read

jobs:
  build:
    runs-on: macos-latest
    steps:
    - uses: actions/checkout@v2
    - name: Setup tmate session
      uses: mxschmitt/action-tmate@v3

18

.github/workflows/tmate.yml

vendored

@@ -1,18 +0,0 @@
name: tmate session

on:
  workflow_dispatch:

permissions:
  contents: read
  models: read

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
    - uses: actions/checkout@v2
    - name: Setup tmate session
      uses: mxschmitt/action-tmate@v3
      env:
        GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

11

.gitignore

vendored

@@ -5,9 +5,6 @@ scratchpad

.vscode

uv.lock
data.db

# We don't use Pipfile, so ignore them
Pipfile
Pipfile.lock
@@ -119,11 +116,3 @@ ENV/

# macOS files
.DS_Store
node_modules
.*.swp

# In case someone compiled tests/ext.c for test_load_extensions, don't
# include it in source control.
tests/*.dylib
tests/*.so
tests/*.dll

@@ -1,4 +0,0 @@
{
  "tabWidth": 2,
  "useTabs": false
}

@@ -1,16 +0,0 @@
version: 2

build:
  os: ubuntu-20.04
  tools:
    python: "3.11"

sphinx:
  configuration: docs/conf.py

python:
  install:
  - method: pip
    path: .
    extra_requirements:
    - docs

52

.travis.yml

Normal file

@@ -0,0 +1,52 @@
language: python
dist: xenial

# 3.6 is listed first so it gets used for the later build stages
python:
  - "3.6"
  - "3.7"
  - "3.5"

# Executed for 3.5 AND 3.5 as the first "test" stage:
script:
  - pip install -U pip wheel
  - pip install .[test]
  - pytest

cache:
  directories:
    - $HOME/.cache/pip

# This defines further stages that execute after the tests
jobs:
  include:
    - stage: deploy latest.datasette.io
      if: branch = master AND type = push
      script:
        - pip install .[test]
        - npm install -g now
        - python tests/fixtures.py fixtures.db fixtures.json
        - export ALIAS=`echo $TRAVIS_COMMIT | cut -c 1-7`
        - datasette publish nowv1 fixtures.db -m fixtures.json --token=$NOW_TOKEN --branch=$TRAVIS_COMMIT --version-note=$TRAVIS_COMMIT --name=datasette-latest-$ALIAS --alias=latest.datasette.io --alias=$ALIAS.datasette.io
    - stage: release tagged version
      if: tag IS present
      python: 3.6
      script:
        - npm install -g now
        - export ALIAS=`echo $TRAVIS_COMMIT | cut -c 1-7`
        - export TAG=`echo $TRAVIS_TAG | sed 's/\./-/g' | sed 's/.*/v&/'`
        - now alias $ALIAS.datasette.io $TAG.datasette.io --token=$NOW_TOKEN
        # Build and release to Docker Hub
        - docker login -u $DOCKER_USER -p $DOCKER_PASS
        - export REPO=datasetteproject/datasette
        - docker build -f Dockerfile -t $REPO:$TRAVIS_TAG .
        - docker tag $REPO:$TRAVIS_TAG $REPO:latest
        - docker push $REPO
      deploy:
        - provider: pypi
          user: simonw
          distributions: bdist_wheel
          password: ${PYPI_PASSWORD}
          on:
            branch: master
            tags: true
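Note on the added .travis.yml: the `ALIAS` and `TAG` variables are built with `cut` and `sed` pipelines whose effect is easier to see in plain string operations. This is a small Python sketch of the same transformations; the function name `deploy_hosts` and the sample commit hash and tag are hypothetical values chosen for illustration.

```python
def deploy_hosts(commit: str, tag: str):
    """Mirror the ALIAS and TAG shell pipelines from .travis.yml."""
    # `cut -c 1-7`: keep the first seven characters of the commit hash
    alias = commit[:7]
    # `sed 's/\./-/g'` then `sed 's/.*/v&/'`: dots become dashes, then prefix "v"
    tag_label = "v" + tag.replace(".", "-")
    return f"{alias}.datasette.io", f"{tag_label}.datasette.io"


# Hypothetical inputs, not taken from a real build.
print(deploy_hosts("0123456789abcdef", "0.28"))
# ('0123456.datasette.io', 'v0-28.datasette.io')
```

In the release stage, `now alias` points the tag hostname at the deployment that was already published under the commit hostname.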

@@ -1,128 +0,0 @@
# Contributor Covenant Code of Conduct

## Our Pledge

We as members, contributors, and leaders pledge to make participation in our
community a harassment-free experience for everyone, regardless of age, body
size, visible or invisible disability, ethnicity, sex characteristics, gender
identity and expression, level of experience, education, socio-economic status,
nationality, personal appearance, race, religion, or sexual identity
and orientation.

We pledge to act and interact in ways that contribute to an open, welcoming,
diverse, inclusive, and healthy community.

## Our Standards

Examples of behavior that contributes to a positive environment for our
community include:

* Demonstrating empathy and kindness toward other people
* Being respectful of differing opinions, viewpoints, and experiences
* Giving and gracefully accepting constructive feedback
* Accepting responsibility and apologizing to those affected by our mistakes,
  and learning from the experience
* Focusing on what is best not just for us as individuals, but for the
  overall community

Examples of unacceptable behavior include:

* The use of sexualized language or imagery, and sexual attention or
  advances of any kind
* Trolling, insulting or derogatory comments, and personal or political attacks
* Public or private harassment
* Publishing others' private information, such as a physical or email
  address, without their explicit permission
* Other conduct which could reasonably be considered inappropriate in a
  professional setting

## Enforcement Responsibilities

Community leaders are responsible for clarifying and enforcing our standards of
acceptable behavior and will take appropriate and fair corrective action in
response to any behavior that they deem inappropriate, threatening, offensive,
or harmful.

Community leaders have the right and responsibility to remove, edit, or reject
comments, commits, code, wiki edits, issues, and other contributions that are
not aligned to this Code of Conduct, and will communicate reasons for moderation
decisions when appropriate.

## Scope

This Code of Conduct applies within all community spaces, and also applies when
an individual is officially representing the community in public spaces.
Examples of representing our community include using an official e-mail address,
posting via an official social media account, or acting as an appointed
representative at an online or offline event.

## Enforcement

Instances of abusive, harassing, or otherwise unacceptable behavior may be
reported to the community leaders responsible for enforcement at
`swillison+datasette-code-of-conduct@gmail.com`.
All complaints will be reviewed and investigated promptly and fairly.

All community leaders are obligated to respect the privacy and security of the
reporter of any incident.

## Enforcement Guidelines

Community leaders will follow these Community Impact Guidelines in determining
the consequences for any action they deem in violation of this Code of Conduct:

### 1. Correction

**Community Impact**: Use of inappropriate language or other behavior deemed
unprofessional or unwelcome in the community.

**Consequence**: A private, written warning from community leaders, providing
clarity around the nature of the violation and an explanation of why the
behavior was inappropriate. A public apology may be requested.

### 2. Warning

**Community Impact**: A violation through a single incident or series
of actions.

**Consequence**: A warning with consequences for continued behavior. No
interaction with the people involved, including unsolicited interaction with
those enforcing the Code of Conduct, for a specified period of time. This
includes avoiding interactions in community spaces as well as external channels
like social media. Violating these terms may lead to a temporary or
permanent ban.

### 3. Temporary Ban

**Community Impact**: A serious violation of community standards, including
sustained inappropriate behavior.

**Consequence**: A temporary ban from any sort of interaction or public
communication with the community for a specified period of time. No public or
private interaction with the people involved, including unsolicited interaction

|

||||

with those enforcing the Code of Conduct, is allowed during this period.

|

||||

Violating these terms may lead to a permanent ban.

|

||||

|

||||

### 4. Permanent Ban

|

||||

|

||||

**Community Impact**: Demonstrating a pattern of violation of community

|

||||

standards, including sustained inappropriate behavior, harassment of an

|

||||

individual, or aggression toward or disparagement of classes of individuals.

|

||||

|

||||

**Consequence**: A permanent ban from any sort of public interaction within

|

||||

the community.

|

||||

|

||||

## Attribution

|

||||

|

||||

This Code of Conduct is adapted from the [Contributor Covenant][homepage],

|

||||

version 2.0, available at

|

||||

https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

|

||||

|

||||

Community Impact Guidelines were inspired by [Mozilla's code of conduct

|

||||

enforcement ladder](https://github.com/mozilla/diversity).

|

||||

|

||||

[homepage]: https://www.contributor-covenant.org

|

||||

|

||||

For answers to common questions about this code of conduct, see the FAQ at

|

||||

https://www.contributor-covenant.org/faq. Translations are available at

|

||||

https://www.contributor-covenant.org/translations.

|

||||

48

Dockerfile

|

|

@ -1,18 +1,42 @@

|

|||

FROM python:3.11.0-slim-bullseye as build

|

||||

FROM python:3.7.2-slim-stretch as build

|

||||

|

||||

# Version of Datasette to install, e.g. 0.55

|

||||

# docker build . -t datasette --build-arg VERSION=0.55

|

||||

ARG VERSION

|

||||

# Setup build dependencies

|

||||

RUN apt update \

|

||||

&& apt install -y python3-dev build-essential wget libxml2-dev libproj-dev libgeos-dev libsqlite3-dev zlib1g-dev pkg-config git \

|

||||

&& apt clean

|

||||

|

||||

RUN apt-get update && \

|

||||

apt-get install -y --no-install-recommends libsqlite3-mod-spatialite && \

|

||||

apt clean && \

|

||||

rm -rf /var/lib/apt && \

|

||||

rm -rf /var/lib/dpkg/info/*

|

||||

|

||||

RUN pip install https://github.com/simonw/datasette/archive/refs/tags/${VERSION}.zip && \

|

||||

find /usr/local/lib -name '__pycache__' | xargs rm -r && \

|

||||

rm -rf /root/.cache/pip

|

||||

RUN wget "https://www.sqlite.org/2018/sqlite-autoconf-3260000.tar.gz" && tar xzf sqlite-autoconf-3260000.tar.gz \

|

||||

&& cd sqlite-autoconf-3260000 && ./configure --disable-static --enable-fts5 --enable-json1 CFLAGS="-g -O2 -DSQLITE_ENABLE_FTS3=1 -DSQLITE_ENABLE_FTS4=1 -DSQLITE_ENABLE_RTREE=1 -DSQLITE_ENABLE_JSON1" \

|

||||

&& make && make install

|

||||

|

||||

RUN wget "https://www.gaia-gis.it/gaia-sins/freexl-1.0.5.tar.gz" && tar zxf freexl-1.0.5.tar.gz \

|

||||

&& cd freexl-1.0.5 && ./configure && make && make install

|

||||

|

||||

RUN wget "https://www.gaia-gis.it/gaia-sins/libspatialite-4.4.0-RC0.tar.gz" && tar zxf libspatialite-4.4.0-RC0.tar.gz \

|

||||

&& cd libspatialite-4.4.0-RC0 && ./configure && make && make install

|

||||

|

||||

RUN wget "https://www.gaia-gis.it/gaia-sins/readosm-1.1.0.tar.gz" && tar zxf readosm-1.1.0.tar.gz && cd readosm-1.1.0 && ./configure && make && make install

|

||||

|

||||

RUN wget "https://www.gaia-gis.it/gaia-sins/spatialite-tools-4.4.0-RC0.tar.gz" && tar zxf spatialite-tools-4.4.0-RC0.tar.gz \

|

||||

&& cd spatialite-tools-4.4.0-RC0 && ./configure && make && make install

|

||||

|

||||

|

||||

# Add local code to the image instead of fetching from pypi.

|

||||

COPY . /datasette

|

||||

|

||||

RUN pip install /datasette

|

||||

|

||||

FROM python:3.7.2-slim-stretch

|

||||

|

||||

# Copy python dependencies and spatialite libraries

|

||||

COPY --from=build /usr/local/lib/ /usr/local/lib/

|

||||

# Copy executables

|

||||

COPY --from=build /usr/local/bin /usr/local/bin

|

||||

# Copy spatial extensions

|

||||

COPY --from=build /usr/lib/x86_64-linux-gnu /usr/lib/x86_64-linux-gnu

|

||||

|

||||

ENV LD_LIBRARY_PATH=/usr/local/lib

|

||||

|

||||

EXPOSE 8001

|

||||

CMD ["datasette"]

|

||||

|

|

|

|||

56

Justfile

|

|

@ -1,56 +0,0 @@

|

|||

export DATASETTE_SECRET := "not_a_secret"

|

||||

|

||||

# Run tests and linters

|

||||

@default: test lint

|

||||

|

||||

# Setup project

|

||||

@init:

|

||||

uv sync --extra test --extra docs

|

||||

|

||||

# Run pytest with supplied options

|

||||

@test *options: init

|

||||

uv run pytest -n auto {{options}}

|

||||

|

||||

@codespell:

|

||||

uv run codespell README.md --ignore-words docs/codespell-ignore-words.txt

|

||||

uv run codespell docs/*.rst --ignore-words docs/codespell-ignore-words.txt

|

||||

uv run codespell datasette -S datasette/static --ignore-words docs/codespell-ignore-words.txt

|

||||

uv run codespell tests --ignore-words docs/codespell-ignore-words.txt

|

||||

|

||||

# Run linters: black, flake8, mypy, cog

|

||||

@lint: codespell

|

||||

uv run black . --check

|

||||

uv run flake8

|

||||

uv run --extra test cog --check README.md docs/*.rst

|

||||

|

||||

# Rebuild docs with cog

|

||||

@cog:

|

||||

uv run --extra test cog -r README.md docs/*.rst

|

||||

|

||||

# Serve live docs on localhost:8000

|

||||

@docs: cog blacken-docs

|

||||

uv run --extra docs make -C docs livehtml

|

||||

|

||||

# Build docs as static HTML

|

||||

@docs-build: cog blacken-docs

|

||||

rm -rf docs/_build && cd docs && uv run make html

|

||||

|

||||

# Apply Black

|

||||

@black:

|

||||

uv run black .

|

||||

|

||||

# Apply blacken-docs

|

||||

@blacken-docs:

|

||||

uv run blacken-docs -l 60 docs/*.rst

|

||||

|

||||

# Apply prettier

|

||||

@prettier:

|

||||

npm run fix

|

||||

|

||||

# Format code with both black and prettier

|

||||

@format: black prettier blacken-docs

|

||||

|

||||

@serve *options:

|

||||

uv run sqlite-utils create-database data.db

|

||||

uv run sqlite-utils create-table data.db docs id integer title text --pk id --ignore

|

||||

uv run python -m datasette data.db --root --reload {{options}}

|

||||

|

|

@ -1,5 +1,3 @@

|

|||

recursive-include datasette/static *

|

||||

recursive-include datasette/templates *

|

||||

include versioneer.py

|

||||

include datasette/_version.py

|

||||

include LICENSE

|

||||

|

|

|

|||

118

README.md

|

|

@ -1,42 +1,69 @@

|

|||

<img src="https://datasette.io/static/datasette-logo.svg" alt="Datasette">

|

||||

# Datasette

|

||||

|

||||

[](https://pypi.org/project/datasette/)

|

||||

[](https://docs.datasette.io/en/latest/changelog.html)

|

||||

[](https://pypi.org/project/datasette/)

|

||||

[](https://github.com/simonw/datasette/actions?query=workflow%3ATest)

|

||||

[](https://docs.datasette.io/en/latest/?badge=latest)

|

||||

[](https://github.com/simonw/datasette/blob/main/LICENSE)

|

||||

[](https://hub.docker.com/r/datasetteproject/datasette)

|

||||

[](https://datasette.io/discord)

|

||||

[](https://travis-ci.org/simonw/datasette)

|

||||

[](http://datasette.readthedocs.io/en/latest/?badge=latest)

|

||||

[](https://github.com/simonw/datasette/blob/master/LICENSE)

|

||||

[](https://black.readthedocs.io/en/stable/)

|

||||

|

||||

*An open source multi-tool for exploring and publishing data*

|

||||

*A tool for exploring and publishing data*

|

||||

|

||||

Datasette is a tool for exploring and publishing data. It helps people take data of any shape or size and publish that as an interactive, explorable website and accompanying API.

|

||||

|

||||

Datasette is aimed at data journalists, museum curators, archivists, local governments, scientists, researchers and anyone else who has data that they wish to share with the world.

|

||||

Datasette is aimed at data journalists, museum curators, archivists, local governments and anyone else who has data that they wish to share with the world.

|

||||

|

||||

[Explore a demo](https://datasette.io/global-power-plants/global-power-plants), watch [a video about the project](https://simonwillison.net/2021/Feb/7/video/) or try it out [on GitHub Codespaces](https://github.com/datasette/datasette-studio).

|

||||

[Explore a demo](https://fivethirtyeight.datasettes.com/fivethirtyeight), watch [a video about the project](https://www.youtube.com/watch?v=pTr1uLQTJNE) or try it out by [uploading and publishing your own CSV data](https://simonwillison.net/2019/Apr/23/datasette-glitch/).

|

||||

|

||||

* [datasette.io](https://datasette.io/) is the official project website

|

||||

* Latest [Datasette News](https://datasette.io/news)

|

||||

* Comprehensive documentation: https://docs.datasette.io/

|

||||

* Examples: https://datasette.io/examples

|

||||

* Live demo of current `main` branch: https://latest.datasette.io/

|

||||

* Questions, feedback or want to talk about the project? Join our [Discord](https://datasette.io/discord)

|

||||

* Comprehensive documentation: http://datasette.readthedocs.io/

|

||||

* Examples: https://github.com/simonw/datasette/wiki/Datasettes

|

||||

* Live demo of current master: https://latest.datasette.io/

|

||||

|

||||

Want to stay up-to-date with the project? Subscribe to the [Datasette newsletter](https://datasette.substack.com/) for tips, tricks and news on what's new in the Datasette ecosystem.

|

||||

## News

|

||||

|

||||

* 23rd June 2019: [Porting Datasette to ASGI, and Turtles all the way down](https://simonwillison.net/2019/Jun/23/datasette-asgi/)

|

||||

* 21st May 2019: The anonymized raw data from [the Stack Overflow Developer Survey 2019](https://stackoverflow.blog/2019/05/21/public-data-release-of-stack-overflows-2019-developer-survey/) has been [published in partnership with Glitch](https://glitch.com/culture/discover-insights-explore-developer-survey-results-2019/), powered by Datasette.

|

||||

* 19th May 2019: [Datasette 0.28](https://datasette.readthedocs.io/en/stable/changelog.html#v0-28) - a salmagundi of new features!

|

||||

* No longer immutable! Datasette now supports [databases that change](https://datasette.readthedocs.io/en/stable/changelog.html#supporting-databases-that-change).

|

||||

* [Faceting improvements](https://datasette.readthedocs.io/en/stable/changelog.html#faceting-improvements-and-faceting-plugins) including facet-by-JSON-array and the ability to define custom faceting using plugins.

|

||||

* [datasette publish cloudrun](https://datasette.readthedocs.io/en/stable/changelog.html#datasette-publish-cloudrun) lets you publish databases to Google's new Cloud Run hosting service.

|

||||

* New [register_output_renderer](https://datasette.readthedocs.io/en/stable/changelog.html#register-output-renderer-plugins) plugin hook for adding custom output extensions to Datasette in addition to the default `.json` and `.csv`.

|

||||

* Dozens of other smaller features and tweaks - see [the release notes](https://datasette.readthedocs.io/en/stable/changelog.html#v0-28) for full details.

|

||||

* Read more about this release here: [Datasette 0.28—and why master should always be releasable](https://simonwillison.net/2019/May/19/datasette-0-28/)

|

||||

* 24th February 2019: [sqlite-utils: a Python library and CLI tool for building SQLite databases](https://simonwillison.net/2019/Feb/25/sqlite-utils/) - a partner tool for easily creating SQLite databases for use with Datasette.

|

||||

* 31st January 2019: [Datasette 0.27](https://datasette.readthedocs.io/en/latest/changelog.html#v0-27) - `datasette plugins` command, newline-delimited JSON export option, new documentation on [The Datasette Ecosystem](https://datasette.readthedocs.io/en/latest/ecosystem.html).

|

||||

* 10th January 2019: [Datasette 0.26.1](http://datasette.readthedocs.io/en/latest/changelog.html#v0-26-1) - SQLite upgrade in Docker image, `/-/versions` now shows SQLite compile options.

|

||||

* 2nd January 2019: [Datasette 0.26](http://datasette.readthedocs.io/en/latest/changelog.html#v0-26) - minor bug fixes, `datasette publish now --alias` argument.

|

||||

* 18th December 2018: [Fast Autocomplete Search for Your Website](https://24ways.org/2018/fast-autocomplete-search-for-your-website/) - a new tutorial on using Datasette to build a JavaScript autocomplete search engine.

|

||||

* 3rd October 2018: [The interesting ideas in Datasette](https://simonwillison.net/2018/Oct/4/datasette-ideas/) - a write-up of some of the less obvious interesting ideas embedded in the Datasette project.

|

||||

* 19th September 2018: [Datasette 0.25](http://datasette.readthedocs.io/en/latest/changelog.html#v0-25) - New plugin hooks, improved database view support and an easier way to use more recent versions of SQLite.

|

||||

* 23rd July 2018: [Datasette 0.24](http://datasette.readthedocs.io/en/latest/changelog.html#v0-24) - a number of small new features

|

||||

* 29th June 2018: [datasette-vega](https://github.com/simonw/datasette-vega), a new plugin for visualizing data as bar, line or scatter charts

|

||||

* 21st June 2018: [Datasette 0.23.1](http://datasette.readthedocs.io/en/latest/changelog.html#v0-23-1) - minor bug fixes

|

||||

* 18th June 2018: [Datasette 0.23: CSV, SpatiaLite and more](http://datasette.readthedocs.io/en/latest/changelog.html#v0-23) - CSV export, foreign key expansion in JSON and CSV, new config options, improved support for SpatiaLite and a bunch of other improvements

|

||||

* 23rd May 2018: [Datasette 0.22.1 bugfix](https://github.com/simonw/datasette/releases/tag/0.22.1) plus we now use [versioneer](https://github.com/warner/python-versioneer)

|

||||

* 20th May 2018: [Datasette 0.22: Datasette Facets](https://simonwillison.net/2018/May/20/datasette-facets)

|

||||

* 5th May 2018: [Datasette 0.21: New _shape=, new _size=, search within columns](https://github.com/simonw/datasette/releases/tag/0.21)

|

||||

* 25th April 2018: [Exploring the UK Register of Members Interests with SQL and Datasette](https://simonwillison.net/2018/Apr/25/register-members-interests/) - a tutorial describing how [register-of-members-interests.datasettes.com](https://register-of-members-interests.datasettes.com/) was built ([source code here](https://github.com/simonw/register-of-members-interests))

|

||||

* 20th April 2018: [Datasette plugins, and building a clustered map visualization](https://simonwillison.net/2018/Apr/20/datasette-plugins/) - introducing Datasette's new plugin system and [datasette-cluster-map](https://pypi.org/project/datasette-cluster-map/), a plugin for visualizing data on a map

|

||||

* 20th April 2018: [Datasette 0.20: static assets and templates for plugins](https://github.com/simonw/datasette/releases/tag/0.20)

|

||||

* 16th April 2018: [Datasette 0.19: plugins preview](https://github.com/simonw/datasette/releases/tag/0.19)

|

||||

* 14th April 2018: [Datasette 0.18: units](https://github.com/simonw/datasette/releases/tag/0.18)

|

||||

* 9th April 2018: [Datasette 0.15: sort by column](https://github.com/simonw/datasette/releases/tag/0.15)

|

||||

* 28th March 2018: [Baltimore Sun Public Salary Records](https://simonwillison.net/2018/Mar/28/datasette-in-the-wild/) - a data journalism project from the Baltimore Sun powered by Datasette - source code [is available here](https://github.com/baltimore-sun-data/salaries-datasette)

|

||||

* 27th March 2018: [Cloud-first: Rapid webapp deployment using containers](https://wwwf.imperial.ac.uk/blog/research-software-engineering/2018/03/27/cloud-first-rapid-webapp-deployment-using-containers/) - a tutorial covering deploying Datasette using Microsoft Azure by the Research Software Engineering team at Imperial College London

|

||||

* 28th January 2018: [Analyzing my Twitter followers with Datasette](https://simonwillison.net/2018/Jan/28/analyzing-my-twitter-followers/) - a tutorial on using Datasette to analyze follower data pulled from the Twitter API

|

||||

* 17th January 2018: [Datasette Publish: a web app for publishing CSV files as an online database](https://simonwillison.net/2018/Jan/17/datasette-publish/)

|

||||

* 12th December 2017: [Building a location to time zone API with SpatiaLite, OpenStreetMap and Datasette](https://simonwillison.net/2017/Dec/12/building-a-location-time-zone-api/)

|

||||

* 9th December 2017: [Datasette 0.14: customization edition](https://github.com/simonw/datasette/releases/tag/0.14)

|

||||

* 25th November 2017: [New in Datasette: filters, foreign keys and search](https://simonwillison.net/2017/Nov/25/new-in-datasette/)

|

||||

* 13th November 2017: [Datasette: instantly create and publish an API for your SQLite databases](https://simonwillison.net/2017/Nov/13/datasette/)

|

||||

|

||||

## Installation

|

||||

|

||||

If you are on a Mac, [Homebrew](https://brew.sh/) is the easiest way to install Datasette:

|

||||

pip3 install datasette

|

||||

|

||||

brew install datasette

|

||||

|

||||

You can also install it using `pip` or `pipx`:

|

||||

|

||||

pip install datasette

|

||||

|

||||

Datasette requires Python 3.8 or higher. We also have [detailed installation instructions](https://docs.datasette.io/en/stable/installation.html) covering other options such as Docker.

|

||||

Datasette requires Python 3.5 or higher. We also have [detailed installation instructions](https://datasette.readthedocs.io/en/stable/installation.html) covering other options such as Docker.

|

||||

|

||||

## Basic usage

|

||||

|

||||

|

|

@ -48,12 +75,41 @@ This will start a web server on port 8001 - visit http://localhost:8001/ to acce

|

|||

|

||||

Use Chrome on OS X? You can run datasette against your browser history like so:

|

||||

|

||||

datasette ~/Library/Application\ Support/Google/Chrome/Default/History --nolock

|

||||

datasette ~/Library/Application\ Support/Google/Chrome/Default/History
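The commands above point Datasette at a database file that another process (Chrome) may still hold open. As a minimal sketch of the underlying idea, and not Datasette's own implementation, Python's standard `sqlite3` module can open such a file read-only through an SQLite URI filename. The helper names `open_read_only` and `table_names` and the `nolock` keyword argument are assumptions made for illustration; `mode=ro` and `nolock=1` are standard SQLite URI parameters.

```python
import sqlite3
from pathlib import Path


def open_read_only(path, nolock=False):
    """Open an existing SQLite file read-only via a URI filename.

    Hypothetical helper for illustration; not part of Datasette.
    """
    # as_uri() percent-encodes spaces and other special characters,
    # which matters for paths such as "Application Support".
    uri = Path(path).expanduser().resolve().as_uri() + "?mode=ro"
    if nolock:
        # Ask SQLite to skip file locking. This is only safe if you can
        # tolerate reading while another process may be writing.
        uri += "&nolock=1"
    return sqlite3.connect(uri, uri=True)


def table_names(conn):
    """Return the names of all tables in the connected database."""
    rows = conn.execute(
        "select name from sqlite_master where type = 'table' order by name"
    )
    return [row[0] for row in rows]
```

Because the connection is opened with `mode=ro`, any attempt to write through it raises `sqlite3.OperationalError`, so a browsing tool built this way cannot modify the browser's history file.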

|

||||

|

||||

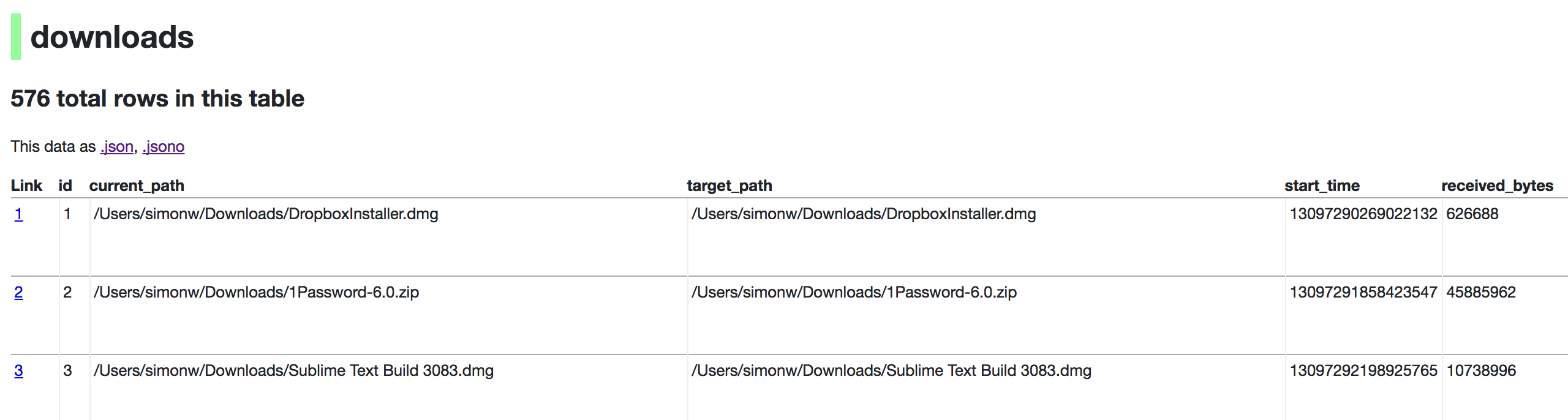

Now visiting http://localhost:8001/History/downloads will show you a web interface to browse your downloads data:

|

||||

|

||||

|

||||

|

||||

## datasette serve options

|

||||

|

||||

$ datasette serve --help

|

||||

|

||||

Usage: datasette serve [OPTIONS] [FILES]...

|

||||

|

||||

Serve up specified SQLite database files with a web UI

|

||||

|

||||

Options:

|

||||

-i, --immutable PATH Database files to open in immutable mode

|

||||

-h, --host TEXT host for server, defaults to 127.0.0.1

|

||||

-p, --port INTEGER port for server, defaults to 8001

|

||||

--debug Enable debug mode - useful for development

|

||||

--reload Automatically reload if database or code change detected -

|

||||

useful for development

|

||||

--cors Enable CORS by serving Access-Control-Allow-Origin: *

|

||||

--load-extension PATH Path to a SQLite extension to load

|

||||

--inspect-file TEXT Path to JSON file created using "datasette inspect"

|

||||

-m, --metadata FILENAME Path to JSON file containing license/source metadata

|

||||

--template-dir DIRECTORY Path to directory containing custom templates

|

||||

--plugins-dir DIRECTORY Path to directory containing custom plugins

|

||||

--static STATIC MOUNT mountpoint:path-to-directory for serving static files

|

||||

--memory Make :memory: database available

|

||||

--config CONFIG Set config option using configname:value

|

||||

datasette.readthedocs.io/en/latest/config.html

|

||||

--version-note TEXT Additional note to show on /-/versions

|

||||

--help-config Show available config options

|

||||

--help Show this message and exit.
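Two of the options listed above take colon-separated pairs: `--static mountpoint:path-to-directory` and `--config configname:value`. The following is a rough sketch of how such a value can be split; `split_pair` is a hypothetical helper, not Datasette's own parser, and the sample values are only examples.

```python
from typing import Tuple


def split_pair(value: str) -> Tuple[str, str]:
    """Split "name:rest" on the first colon only.

    Hypothetical helper for illustration; not Datasette's parser.
    """
    # partition() splits at the first colon, so any later colons
    # (for example in a Windows drive letter) stay in the second part.
    name, sep, rest = value.partition(":")
    if not sep or not name or not rest:
        raise ValueError(f"expected name:value, got {value!r}")
    return name, rest


if __name__ == "__main__":
    print(split_pair("sql_time_limit_ms:2500"))
    print(split_pair("static:/srv/site/static"))
```

Splitting on the first colon only is a deliberate choice in this sketch: it keeps a path such as `C:\assets` intact when it appears after the mount point.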

|

||||

|

||||

## metadata.json

|

||||

|

||||

If you want to include licensing and source information in the generated datasette website you can do so using a JSON file that looks something like this:
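The diff context does not show the example file itself. As a hedged sketch, assuming the top-level keys `title`, `license`, `license_url`, `source` and `source_url`, a metadata file could be generated from Python like this. All values below are placeholders, and `write_metadata` is a hypothetical helper rather than anything shipped with Datasette.

```python
import json

# Placeholder values for illustration only; replace with your own.
metadata = {
    "title": "Example dataset",
    "license": "CC Attribution 4.0 License",
    "license_url": "https://creativecommons.org/licenses/by/4.0/",
    "source": "Example source",
    "source_url": "https://example.com/",
}


def write_metadata(path, data):
    """Write the metadata dictionary to `path` as indented JSON."""
    with open(path, "w", encoding="utf-8") as fp:
        json.dump(data, fp, indent=4)
        fp.write("\n")


if __name__ == "__main__":
    write_metadata("metadata.json", metadata)
```

The resulting file can then be passed to the server with the `-m` / `--metadata` option shown in the help output, for example `datasette serve database.db --metadata metadata.json`.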

|

||||

|

|

@ -74,7 +130,7 @@ The license and source information will be displayed on the index page and in th

|

|||

|

||||

## datasette publish

|

||||

|

||||

If you have [Heroku](https://heroku.com/) or [Google Cloud Run](https://cloud.google.com/run/) configured, Datasette can deploy one or more SQLite databases to the internet with a single command:

|

||||

If you have [Heroku](https://heroku.com/), [Google Cloud Run](https://cloud.google.com/run/) or [Zeit Now v1](https://zeit.co/now) configured, Datasette can deploy one or more SQLite databases to the internet with a single command:

|

||||

|

||||

datasette publish heroku database.db

|

||||

|

||||

|

|

@ -84,8 +140,4 @@ Or:

|

|||

|

||||

This will create a docker image containing both the datasette application and the specified SQLite database files. It will then deploy that image to Heroku or Cloud Run and give you a URL to access the resulting website and API.

|

||||

|

||||

See [Publishing data](https://docs.datasette.io/en/stable/publish.html) in the documentation for more details.

|

||||

|

||||

## Datasette Lite

|

||||

|

||||

[Datasette Lite](https://lite.datasette.io/) is Datasette packaged using WebAssembly so that it runs entirely in your browser, no Python web application server required. Read more about that in the [Datasette Lite documentation](https://github.com/simonw/datasette-lite/blob/main/README.md).

|

||||

See [Publishing data](https://datasette.readthedocs.io/en/stable/publish.html) in the documentation for more details.

|

||||

|

|

|

|||

1

_config.yml

Normal file

|

|

@ -0,0 +1 @@

|

|||

theme: jekyll-theme-architect

|

||||

|

|

@ -1,8 +0,0 @@

|

|||

coverage:

|

||||

status:

|

||||

project:

|

||||

default:

|

||||

informational: true

|

||||

patch:

|

||||

default:

|

||||

informational: true

|

||||

|

|

@ -1,8 +1,3 @@

|

|||

from datasette.permissions import Permission # noqa

|

||||

from datasette.version import __version_info__, __version__ # noqa

|

||||

from datasette.events import Event # noqa

|

||||

from datasette.utils.asgi import Forbidden, NotFound, Request, Response # noqa

|

||||

from datasette.utils import actor_matches_allow # noqa

|

||||

from datasette.views import Context # noqa

|

||||

from .hookspecs import hookimpl # noqa

|

||||

from .hookspecs import hookspec # noqa

|

||||

|

|

|

|||

|

|

@ -1,4 +0,0 @@

|

|||

from datasette.cli import cli

|

||||

|

||||

if __name__ == "__main__":

|

||||

cli()

|

||||

556

datasette/_version.py

Normal file

|

|

@ -0,0 +1,556 @@

|

|||

# This file helps to compute a version number in source trees obtained from

|

||||

# git-archive tarball (such as those provided by githubs download-from-tag

|

||||

# feature). Distribution tarballs (built by setup.py sdist) and build

|

||||

# directories (produced by setup.py build) will contain a much shorter file

|

||||

# that just contains the computed version number.

|

||||

|

||||

# This file is released into the public domain. Generated by

|

||||

# versioneer-0.18 (https://github.com/warner/python-versioneer)

|

||||

|

||||

"""Git implementation of _version.py."""

|

||||

|

||||

import errno

|

||||

import os

|

||||

import re

|

||||

import subprocess

|

||||

import sys

|

||||

|

||||

|

||||

def get_keywords():

|

||||

"""Get the keywords needed to look up the version information."""

|

||||

# these strings will be replaced by git during git-archive.

|

||||

# setup.py/versioneer.py will grep for the variable names, so they must

|

||||

# each be defined on a line of their own. _version.py will just call

|

||||

# get_keywords().

|

||||

git_refnames = "$Format:%d$"

|